The experiment failed, but please publish anyway

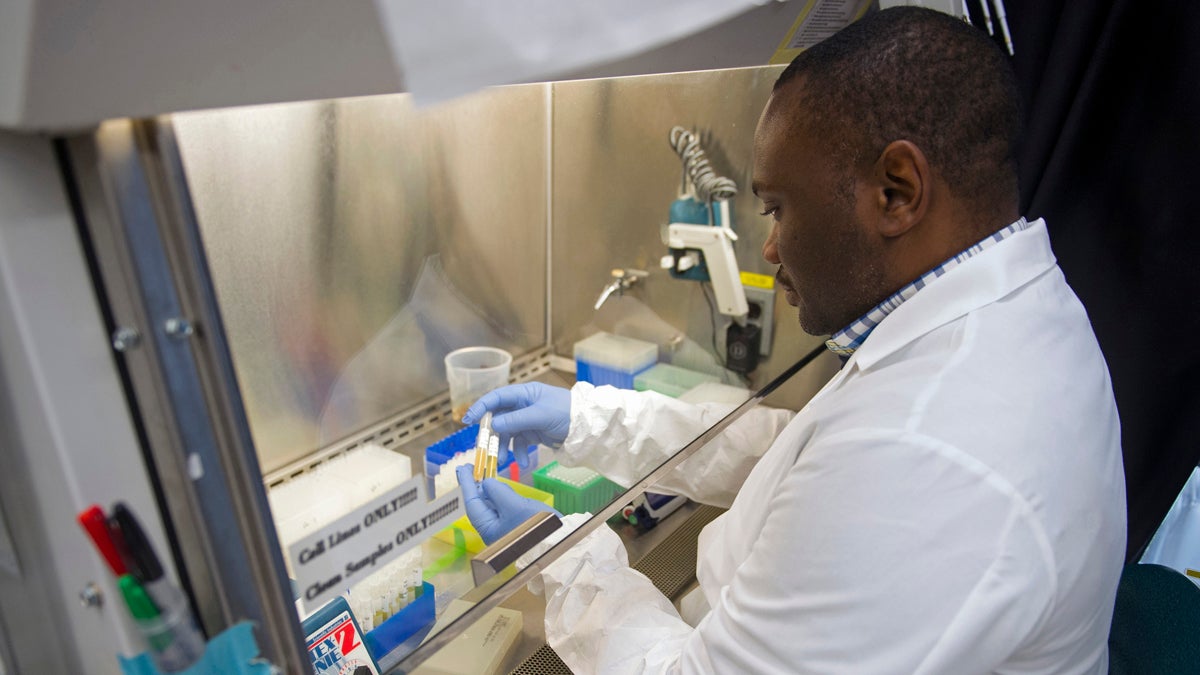

Biologist Olivier Mbaya works with serum samples from healthy volunteer participants in a European study of an experimental Ebola vaccine, at the Vaccine Research Center at the National Institutes of Health in Bethesda, Md. It took 16 years of twists and turns. Over and over, Dr. Nancy Sullivan thought she'd finally gotten her Ebola vaccine right, only to see the next experiment fail. But it was those failures that Sullivan credits for helping her unravel enough mysteries of the immune system. (Cliff Owen/AP Photo)

Not associated. No effect. Evidence against.

These are not words that typically make it into the published scientific literature. But increasingly, researchers are arguing that they should.

A negative results pioneer

When Harvard cell biologist Bjorn Olsen told his colleagues about his idea to start the Journal of Negative Results in Biomedicine about 15 years ago, the response was not encouraging.

“They were laughing,” he recalls. “And I laughed with them.”

But the more he thought about it, the more it was clear that such a journal was necessary. They might not be sexy or exciting, but negative results — findings that don’t support a scientist’s hypothesis — are hugely important. In fact, negative results are the only ones that can provide definitive proof.

“In the end, you can only prove when a hypothesis is wrong,” says Olsen, “by getting the negative data that forced you to discard it.”

The most famous case of a successful negative result occurred in Cleveland in 1887. Albert Michelson and Edward Morley, a pair of American scientists, tried but failed to find any evidence for the prevailing idea that light waves traveled through a substance called ether. The result paved the way for Albert Einstein to develop his theory of special relativity. In recognition, Michelson received the Nobel Prize in physics.

Kudos for failed experiments, however, are few and far between. And many aren’t even written up.

A lack of transparency

“Only about a half of randomized control trials reach publication,” says Kay Dickersin, a professor at the Johns Hopkins Bloomberg School of Public Health. “That means people have enrolled in studies and have agreed to be tested using different interventions, and that knowledge never reaches the public domain.”

Since the 1980s, Dickersin has been studying the positive publication bias. The biggest advance, she says, isn’t a journal, but a database that most clinical researchers seeking FDA approval must use.

“The data that’s in ClinicalTrials.gov is better than what you would get in a publication,” she says. “It’s in a standardized format, and so you know what you’re getting because it’s required. There can be less missing data and so forth.”

Compliance has been an issue, she acknowledges. But it’s a step toward getting all results — especially if a new drug didn’t work — out in the open.

Skewing the truth

That type of evenhanded reporting, however, doesn’t apply to basic biomedical research — the initial discoveries that are mined to develop new therapies. And for Glenn Begley, the chief scientific officer for the Malvern-based biotech company TetraLogic, that’s a problem.

“It distorts the reality so we think something is more positive than it really is,” he says. “Simply because the negative results are not available for everyone to review.”

Begley previously led the hematology-oncology research program at Amgen, another pharma company. Frustrated by the high failure rate of cancer drugs, he decided to see how many “landmark” preclinical studies the group had been able to replicate over his decade of working there.

Remarkably, only six of 53 studies could be reproduced, even after consulting with the original investigators. Begley had expected some discrepancies; after all, he was dealing with finicky biological systems. This estimate, though, suggested that nearly 90 percent of the literature was wrong.

“Honestly, I was shocked with this finding,” he says. “I had spent 20 years in academic research before I joined industry, and during that time I had assumed that the majority of the literature was correct.”

According to Begley, the finding explained a big part of why drug development is so slow.

“The job is hard enough as it is without taking false leads and wasting time,” he says.

Fighting back with (more) science

Diligent reporting of negative findings, of course, won’t solve science’s reproducibility crisis. But it could cut down on the number of labs squandering resources, only to discover the same dead end. Many researchers think it could make science faster and more efficient.

Guiding the way to better scientific practice is metascience, the study of science itself. In trying to understand what drives publication bias, Daniele Fanelli of the Meta-Research Innovation Center at Stanford has looked at how frequently negative results are reported in each scientific discipline.

“When you move from the physical to the social sciences, you see the proportion of positive results reported as opposed to negative, increasing,” he says. “And increasing very dramatically.”

The top offenders? Psychology and psychiatry, which publish findings supporting the tested hypothesis 95 percent of the time.

To Fanelli, this suggests there are different reasons, specific to each field, for why negative results don’t generally see the light of day. For biologists and geneticists, it could be not taking the time to write up a paper for a “failed” project. For social scientists, he says, “it is more that researchers have many degrees of freedom within their theories and methodologies to build the study that will produce the results they expect.”

Both are problematic, but require different remedies — not all of which are yet clear.

In recent years the number of specialized journals for negative results has grown to include ecology, chemistry, and pharmaceuticals. Publishing giant Elsevier recently committed to the online title, New Negatives in Plant Science. Others, including the journals PLOS ONE and Scientific Reports, explicitly state in author guidelines that negative results will be considered.

But if finding an outlet were the only problem, Fanelli says, those journals should be swamped with submissions.

Olsen, the Journal of Negative Results in Biomedicine’s editor-in-chief, says he gets hundreds to thousands of papers vying for acceptance a year — not much compared to top-tier journals such as Cell, Science, and Nature. But he’s pleased with the progress.

“I think that if this journal, by existing, can get people to think differently when it comes to what should be published in science,” he says, “then I think it has been successful.”
