Scientists have used fMRI to study brain activity for years. Now, some question the results’ reliability

Scientists have found that results can change: brain scans from the same person doing the same thing can be different a week or a month later.

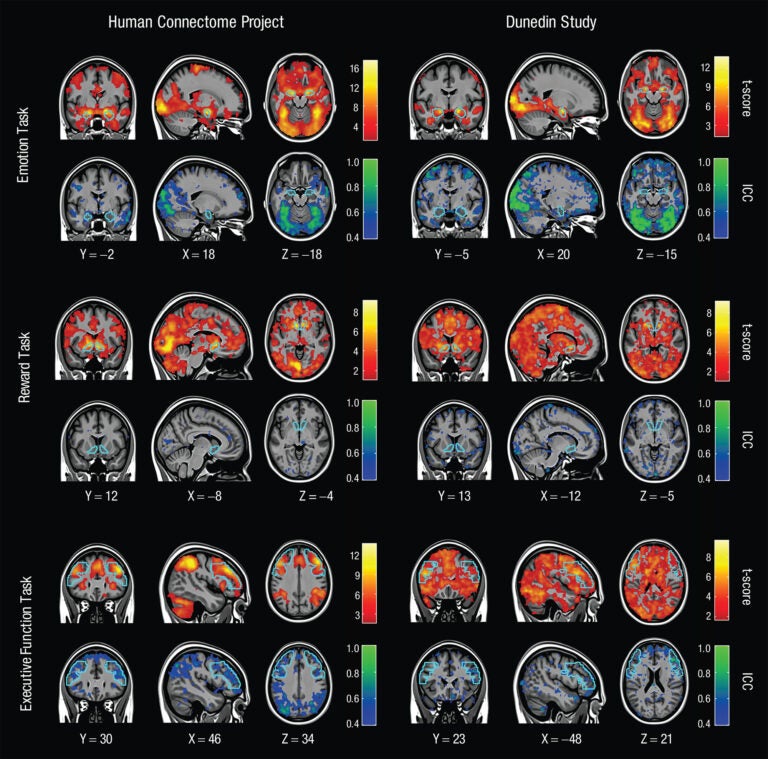

Brain scans showing MRI mapping for 3 tasks across 2 different days. Warm colors show how the results hold up in groups. Cool colors show how results are less reliable person to person. (Annchen Knodt/Duke University)

This story is from The Pulse, a weekly health and science podcast.

Scientists have studied living human brains using fMRI for years to answer questions about addiction and depression, and even whether a bad breakup hurts as much as spilling hot coffee on yourself. There have been so many studies of which part of the brain “lights up” when a research subject does something that the phrase has become a bit of a cliche, even though it is not entirely accurate.

Functional magnetic resonance imaging is an indirect measure of brain activity: Blood that carries more oxygen behaves differently in a magnetic field than blood that carries less oxygen. An active area of the brain would need more oxygen, so tracking the blood with more oxygen is an indirect measure of which parts of the brain are active.

For years, scientists have also tried to explain just what fMRI can, and cannot, measure. Now, several groups of scientists have looked back at fMRI research to figure out how reliable the findings are. In other words, they want to know whether a researcher can measure something and get the same result each time, said Stephanie Noble, a computational neuroscientist at Yale who has written about the recent findings.

Scientists have found these results can change. Noble said brain scans from the same person doing the same thing can be different a week or a month later, which is unexpected.

“We know that your brain anatomy is not changing, if you have a cerebellum in one place in your brain, it’s not going to be in a completely different place,” she said. “But … as we’re measuring you in the scanner, you might be thinking about what you had for lunch, you might be making plans for the future, you might be really sad about something that happened that day. But if we measure you the next week, maybe you’re on vacation … you’re in a very different cognitive state, so there’s definitely this tension between things that are fixed and things that are changing.”

Think about two copies of the same picture, she said — both blurry, but blurry in slightly different ways. Not every pixel is the same, but they have the same underlying image.

That means researchers cannot answer questions that depend on looking at each individual pixel, but they can still answer questions about the overall pattern.

Another problem is that researchers can sometimes look at the same set of fMRI data and come to different conclusions. That’s because scientists generally agree on what the noise is, or where to clean up the picture, but they do not always agree on which tool or software package should be used to do the cleaning, said David Zald, a neuroscientist and director of the Center for Advanced Human Brain Imaging Research at Rutgers University.

“There are some packages or … tools that a lot of people use; there’s none of them which everyone uses or that everyone agrees is the best way to do it,” Zald said. “I’ve always figured that if a finding is large enough, it’s going to come through regardless of whether I did this with this tool or that tool.”

New findings that question fMRI research can sometimes lead to gloomy headlines, and this time is no different.

I know that fMRI research has serious replicability problems that need to be addressed, but news headlines like this are deeply harmful to our field, both in terms of public understanding of the work we do and in the way that other neuroscientists see us. https://t.co/DR00cNmmZ9

— Jesse Rissman (@jesse_rissman) June 26, 2020

But it does not mean that the new research invalidates all the years of work with fMRI.

“It’s not necessarily like a big death knell for the field,” said Yale’s Stephanie Noble. She explained the results can still hold at a group level: Results are more likely to be reliable if researchers look at a group of people, usually the more the better.
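The group-level point can be pictured with a small simulation. This is only a sketch under assumed numbers, not anything taken from the research Noble describes: each simulated person has a stable brain response plus session-to-session noise that is twice as large (`noise_sd`, `signal_sd`, and `group_effect` are all hypothetical values chosen for illustration). Individual measurements agree poorly between two days, while the group average barely moves.

```python
import random
import statistics


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def simulate_two_sessions(n_people, signal_sd=1.0, noise_sd=2.0,
                          group_effect=1.0, seed=1):
    """Simulate one measurement per person on each of two days.

    All parameters are illustrative assumptions, not real fMRI values.
    """
    rng = random.Random(seed)
    day1, day2 = [], []
    for _ in range(n_people):
        # The stable part is the same on both days for a given person.
        stable = group_effect + rng.gauss(0, signal_sd)
        # The noise part is drawn fresh for each session.
        day1.append(stable + rng.gauss(0, noise_sd))
        day2.append(stable + rng.gauss(0, noise_sd))
    return day1, day2


day1, day2 = simulate_two_sessions(500)

# How well does each person's day-1 value predict their day-2 value?
individual_reliability = pearson(day1, day2)

# How much does the group average change between the two days?
group_mean_shift = abs(statistics.fmean(day1) - statistics.fmean(day2))

print(f"person-to-person test-retest correlation: {individual_reliability:.2f}")
print(f"shift in the group average between days:  {group_mean_shift:.2f}")
```

With these assumed settings the person-level correlation is weak, yet the two group averages land close together, which is the pattern the caption at the top of the story describes.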

Other researchers have looked at just how large that group should be to get reliable results, which is an important question because running an fMRI can cost more than $1,000 an hour.

Some scientists use fMRI studies to try to link brain activity to behavior or mental health, asking questions such as: What does the brain activity of someone who has depression look like?

Scott Marek, a neuroscientist at the Washington University School of Medicine, looked at existing work like that to see how reliable those findings are. If a study tries to answer those types of questions with a small sample size, he said, “it’s hard to know whether they’re right or wrong.”

“At the very least, what we’ve shown is even if they do correctly conclude that there is a relationship, it’s likely that that relationship is inflated,” Marek added.

Marek and his colleagues took two of the largest fMRI datasets they could find, with tens of thousands of people. They asked research questions about fMRI scans and mental health, and tried answering them using different sample sizes of 10, 20, 100 people … up to tens of thousands. They found that if scientists want reliable results for those questions, they need to study thousands of people.
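The logic of comparing sample sizes can be sketched with simulated data. This is not Marek's analysis or data. It assumes a weak true correlation of 0.1 between a brain measure and a behavior score (the value of `true_r`, the sample sizes, and the number of repeated studies are all hypothetical), runs many imaginary studies at each size, and records how much the estimated correlation varies.

```python
import random
import statistics


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def simulate_study(n, true_r, rng):
    """Run one imaginary study of n people and return its correlation."""
    xs, ys = [], []
    for _ in range(n):
        x = rng.gauss(0, 1)  # hypothetical brain measure
        # Behavior score built so its true correlation with x is true_r.
        y = true_r * x + (1 - true_r ** 2) ** 0.5 * rng.gauss(0, 1)
        xs.append(x)
        ys.append(y)
    return pearson(xs, ys)


def spread_by_sample_size(sizes, true_r=0.1, n_studies=200, seed=0):
    """For each sample size, repeat the study and summarize the estimates.

    Returns {n: (mean estimate, standard deviation, largest estimate)}.
    """
    rng = random.Random(seed)
    summary = {}
    for n in sizes:
        rs = [simulate_study(n, true_r, rng) for _ in range(n_studies)]
        summary[n] = (statistics.fmean(rs), statistics.stdev(rs), max(rs))
    return summary


results = spread_by_sample_size([20, 100, 2000])
for n, (mean_r, sd_r, max_r) in results.items():
    print(f"n={n:5d}  mean r={mean_r:+.3f}  spread={sd_r:.3f}  largest r={max_r:+.3f}")
```

In this toy setup, small studies scatter widely around the true value, so the most striking small-study results overstate the relationship. Large studies cluster tightly around it.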

Time for a course correction

That’s not great news for some researchers. But Marek’s research partner, psychologist Brenden Tervo-Clemmens at the University of Pittsburgh, stressed they are not saying the fMRI research with small samples is bad. They are saying that scientists who did this work in the past, including themselves, should think about repeating their research with more people.

“We can’t point to a single paper, a single author and say that … work is incorrect,” he said. “All we can do is kind of call for a significant change in how these studies are done.”

Another solution is to work with other researchers to agree on the best ways to reduce the noise in fMRI data, or to clean up the data using several different sets of tools and check that the results stay the same, said David Zald of Rutgers.

Researchers will learn from their mistakes and get better over time, which is how science works, Marek said.

“We’ve been at this as a field for 30 years. Fields like astronomy and cosmology have been at it for 500 years, genetics has been at it for decades longer than us,” he said. “Just because you have a finding that indicates that you need a course correction, I don’t think that that’s a reason for pessimism, I think that’s a reason for optimism.”
