Babies are ‘teaching’ robots how to navigate the world


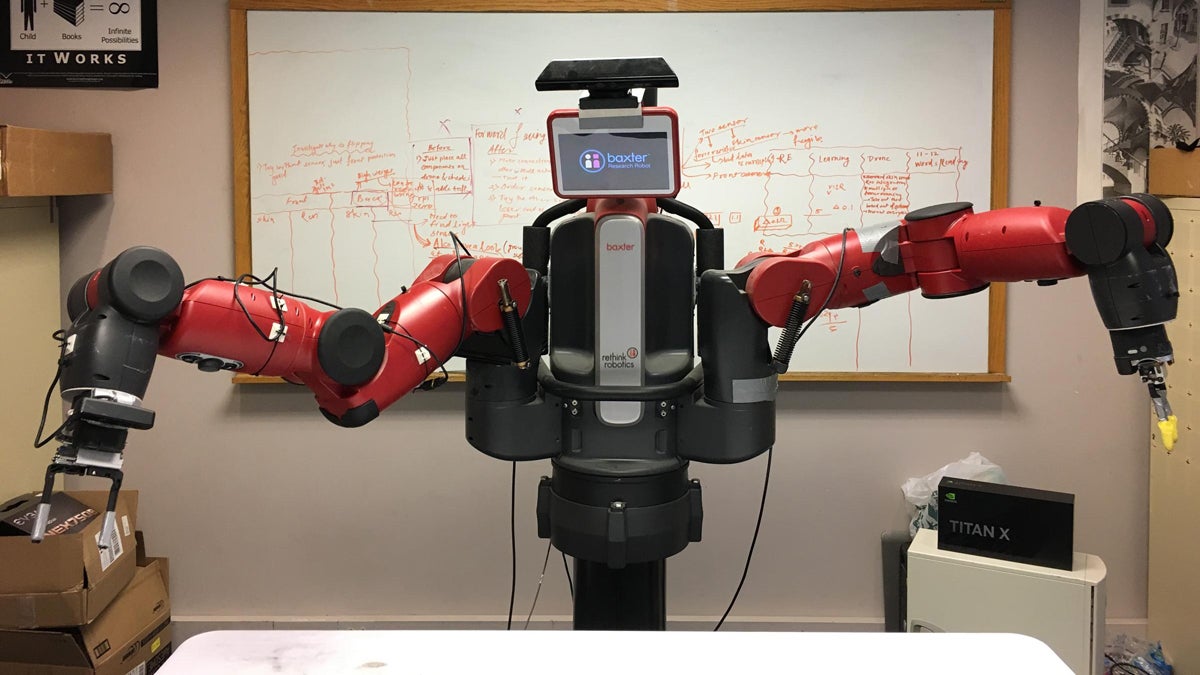

A Baxter robot at Carnegie Mellon University. (Larkin Page-Jacobs/WESA)

Robots are great at doing a lot of things, but they have trouble interacting with the physical world.

Roboticists in Pittsburgh are tackling that challenge—and looking to infants for inspiration.

Eleven-month-old Josie sits on the floor of her nursery in Lawrenceville, amid a sea of blocks, puzzle pieces, and plastic balls. Her mom, Acadia Klain, sat near her and said that over the past month or two, Josie’s begun to understand that she can control what an object does and where it goes.

“She’s really figured out how to grab things and use them and direct them,” Klain said.

Even small objects–like cereal.

“One thing that’s changed recently is the pincer grip. So that she can pick up Cheerios on her own now. A single Cheerio,” she said.

The basic hand-eye coordination that comes naturally to babies is actually very complicated. Roboticists know just how complex it is because they’ve tried to program machines to handle seemingly simple tasks.

Carnegie Mellon University assistant professor Abhinav Gupta explained that, in order to pick up an object, first you have to understand what it is, how much it weighs, and how it’s shaped. Then you have to use your eyes, arms and hands to move it.

Babies aren’t born with the ability to manipulate objects–they learn through practice.

“And that’s why we started this effort to basically learn how to see by having robots interact with the world essentially,” Gupta said. “Baby robots, yeah.”

That means robots need to spend even more time–thousands of hours–touching, prodding and lifting things. So Gupta installed a robot in a big public room in Smith Hall at Carnegie Mellon, where passersby could interact with it by placing random objects in front of it.

The robot, which is about six feet wide and six feet tall, has two long, jointed arms, and a tablet for a face. On the end of each arm are two fingers coated in sensors, and it has a bunch of cameras to “see” objects from different viewpoints.

Putting the robot in a shared space with a range of objects gave the scientists a diverse set of data–and it’s those data that help a robot learn how to pick up an object. Every time the machine uses its fingers to manipulate something, a data point is created. It doesn’t matter if it fails to pick it up, drops it, or actually lifts it in the air–each interaction is information. Over time, those data help the robot learn exactly what it needs to do to be successful.

This robot, however, is stationary. It can’t navigate the world. So, Gupta said they’re working on a new robot that flies and smacks into things on purpose.

“Our goal is to learn about any possible object in the world. We have built a drone with similar kind of sensors that the current robot has,” Gupta said. “So this drone has a skin all around the hull and the goal of this drone is to just go and hit as many objects as it can in the world.”

So far, the scientists have crashed it into things 10,000 times. Gupta said eventually they want robots to connect images of objects, such as a chair you would see in an Internet search, with a chair they might encounter in real life and with how it’s used.

“The ultimate goal is to build a machine that can live in your home and interact with the objects exactly the way humans are doing. And you can throw any new object in front of it. By just exploring objects on its own, it can figure out how to use it, what do you do with it, and so on,” Gupta said.

Right now a toddler can easily out-maneuver the robots Gupta and his team have built, but the robots are learning, and the scientists are designing more robots that will accumulate and share more data.

Eventually, it could be a robot that helps your infant figure out how to put blocks in a bin–or eat Cheerios.

This story originally aired on WESA.
